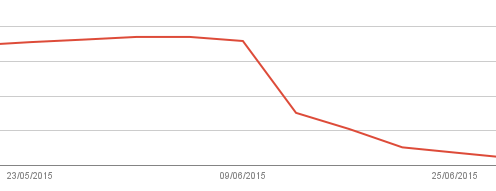

For several weeks I had neglected my Google Webmaster Tools reports, so I did not notice that the number of resources blocked from Googlebot was increasing every day because of Google Panda 4. The ‘Fetch as Google’ feature in Google Webmaster Tools (GWT) shows how much these blocked resources matter.

Googlebot follows the robots.txt directives for every file it fetches, including JavaScript, CSS and image files. If your site’s robots.txt file disallows crawling of these assets, it directly harms how well Google’s algorithms render and index your content.
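The effect of such a rule can be sketched locally with Python's standard `urllib.robotparser` module. The robots.txt content, the `example.com` URLs and the `is_allowed` helper below are hypothetical, and the standard-library parser only approximates Googlebot's matching (for instance, it does not handle Google's wildcard extensions), so treat this as a rough check rather than a replacement for Fetch as Google.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks a WordPress asset folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-includes/
"""


def is_allowed(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Hypothetical URLs on an example site.
script = "https://example.com/wp-includes/js/jquery/jquery.js"
post = "https://example.com/blog/hello-world/"

print(is_allowed(ROBOTS_TXT, script))  # False: the script is blocked
print(is_allowed(ROBOTS_TXT, post))    # True: the page itself is crawlable
```

The page is crawlable but one of its scripts is not, which is the situation that leads to a partially rendered page.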

In this post, I will explain how I diagnosed blocked Googlebot issues and solved them with Google Webmaster Tools.

Here is mine:

How to check what resources are blocked

Fortunately, Google Webmaster Tools can help you find the problems that may be blocking Googlebot from accessing your site. From the Search Console Dashboard, navigate to Google Index > Blocked Resources.

In this section, you will find the number of pages with blocked resources, listed below. Choose the main domain to open its list of blocked resources. Here you can review them and find out why each one is blocked.

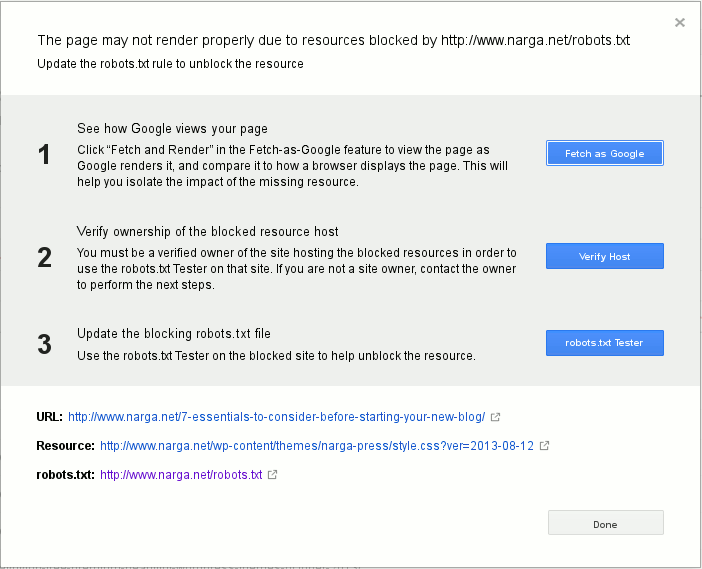

In my case, the file style.css was blocked for Googlebot, so the page wouldn’t render correctly. Clicking on it showed a list of pages that were only partially rendered. I chose the first item, which opened a window with information and suggested actions to help me solve the problem:

Complete the 3 steps shown in the figure above, then check and fix the other blocked resources as Google recommends.

Solving this problem is easy, but you need to allow a few weeks for Google to re-index your website and update its status.

Here are the steps I took to prevent it from happening again:

- Handling resources blocked by `robots.txt`: Allowing bots to index anything and everything on your site can actually harm your site’s rankings. On my WordPress site, I unblocked the `wp-includes` folder, which holds the default scripts and files used by many themes and plugins. Next came `wp-content`, for the same reason. Here is my new `robots.txt`:

      User-agent: *
      Disallow: /wp-admin/
      Disallow: /trackback/
      Disallow: /xmlrpc.php
      Disallow: /go/

  As you can see, I blocked the `wp-admin` folder, `trackback` and `xmlrpc.php` to prevent spam, and `/go/` for our affiliate links.

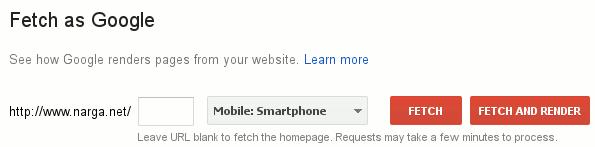

Remember, if Google finds that these resources are blocked, Googlebot can’t use them when it renders those pages for search.

- Make sure that Googlebot can crawl your JavaScript, CSS and image files by using the `Fetch as Google` feature in Google Webmaster Tools.

Fetch and Render

Google Webmaster Tools shows screenshots of the page rendered both as Googlebot and as a typical user. If the problem has been fixed, you will get a result similar to the image below:

Fetch and Render Result

After solving the mobile usability issues, you can use Submit to index, because the changes you made can influence your rankings in search results. Submit to index makes Google crawl your changes and index your page faster. The button appears beside the Status result only after the render has been processed.

You have two choices:

  - Crawl only this URL: You are allowed to submit only 500 URLs per month.

  - This URL and its direct links: You are allowed 10 submissions per month for this option.

- Be sure your website passes the Mobile-Friendly Test. Since Mobilegeddon this is very important, because Google announced that mobile-friendliness would become a ranking factor in mobile search results on April 21, 2015. With this update, Googlebot now has a smartphone that it uses to visit and index your website, haha.

- Guarantee the technical quality needed to run your website flawlessly. Some common problems make it hard for Googlebot to index your website, such as DNS, firewall, over-optimized server configuration…
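As a local complement to the checklist above, the sketch below lists the scripts, stylesheets and images a page references and reports which of them the robots.txt from the first step would block for Googlebot. The sample HTML, the `example.com` base URL and the helper names (`AssetCollector`, `blocked_assets`) are hypothetical, and Python's `urllib.robotparser` only approximates Googlebot's rule matching, so the result is a hint and not a substitute for Fetch and Render.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser

# The rules from the robots.txt shown in the first step.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /trackback/
Disallow: /xmlrpc.php
Disallow: /go/
"""

# Hypothetical page markup used only for illustration.
PAGE = """
<html><head>
<link rel="stylesheet" href="/wp-content/themes/demo/style.css">
<script src="/wp-includes/js/jquery/jquery.js"></script>
</head><body>
<img src="/go/banner.png">
</body></html>
"""


class AssetCollector(HTMLParser):
    """Collect absolute URLs of scripts, stylesheets and images on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.assets = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "img") and attrs.get("src"):
            self.assets.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "link" and attrs.get("href"):
            # Only stylesheet links matter for rendering.
            if "stylesheet" in (attrs.get("rel") or "").lower():
                self.assets.append(urljoin(self.base_url, attrs["href"]))


def blocked_assets(html, base_url, robots_txt, agent="Googlebot"):
    """Return the asset URLs in `html` that `agent` may not fetch."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    collector = AssetCollector(base_url)
    collector.feed(html)
    return [url for url in collector.assets if not rules.can_fetch(agent, url)]


print(blocked_assets(PAGE, "https://example.com/", ROBOTS_TXT))
# ['https://example.com/go/banner.png']
```

With these rules the theme stylesheet and the `wp-includes` script stay crawlable, while anything served from `/go/` is reported as blocked.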

TIPS: Don’t include your website’s XML sitemap in `robots.txt`, because Google isn’t interested in the spider arguments available in it.

NOTES: If you use the MaxCDN service, make sure their custom robots.txt is not activated, or that its content is the same as the original file on your server.

To verify this, log in to MaxCDN, navigate to Contact Zones then Manage, and find the Robot.txt section in the SEO tab. If it is enabled, review and update its content. Wait a few minutes before running Fetch and Render again.
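The comparison between the CDN's robots.txt and the original file can also be sketched in a few lines of Python. The helper names (`fetch_robots`, `normalize`, `same_rules`), the sample contents and the URLs in the usage note are assumptions made for illustration; nothing here uses a MaxCDN API, it only compares two pieces of text.

```python
from urllib.request import urlopen


def fetch_robots(url: str) -> str:
    """Download a robots.txt file and return its text (requires network access)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def normalize(robots_txt: str) -> list:
    """Drop comments, blank lines and surrounding whitespace, keeping only rules."""
    rules = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0].strip()
        if line:
            rules.append(line)
    return rules


def same_rules(origin_txt: str, cdn_txt: str) -> bool:
    """Return True if both files contain the same rules in the same order."""
    return normalize(origin_txt) == normalize(cdn_txt)


# Hypothetical contents: the origin file and a stricter CDN override.
ORIGIN = "User-agent: *\nDisallow: /wp-admin/\n"
CDN = "# custom CDN file\nUser-agent: *\nDisallow: /\n"

print(same_rules(ORIGIN, ORIGIN))  # True
print(same_rules(ORIGIN, CDN))     # False
```

Against a live site, the two inputs could come from calls such as `fetch_robots("https://example.com/robots.txt")` and `fetch_robots("https://cdn.example.com/robots.txt")`, where both hostnames are placeholders for your own origin and CDN domains.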

Hello Narga,

I don’t understand how to remove blocked resources.

Last time I checked, 400 links were blocked because of the “disallow wp-include” line, so I removed it from the robots file to allow it.

But now, after 2 weeks, there are 670 blocked links. I don’t understand what to do.

I checked Fetch and Render, and all page layouts are unaffected and show perfectly, but the ad banners are not shown and other minor code is blocked.

I’m so confused now. Does the increase in links affect the website’s SEO, or is there no problem if the links increase?

Please help with this.

Have you resubmitted the sitemap? Did you recheck it with my guide above?

It takes at least 2 weeks to get the new result. Just wait a little longer.

Hi Narga,

That is correct, I have already submitted it so many times, but the blocked resources have not cleared. Please tell me clearly how to remove blocked resources…